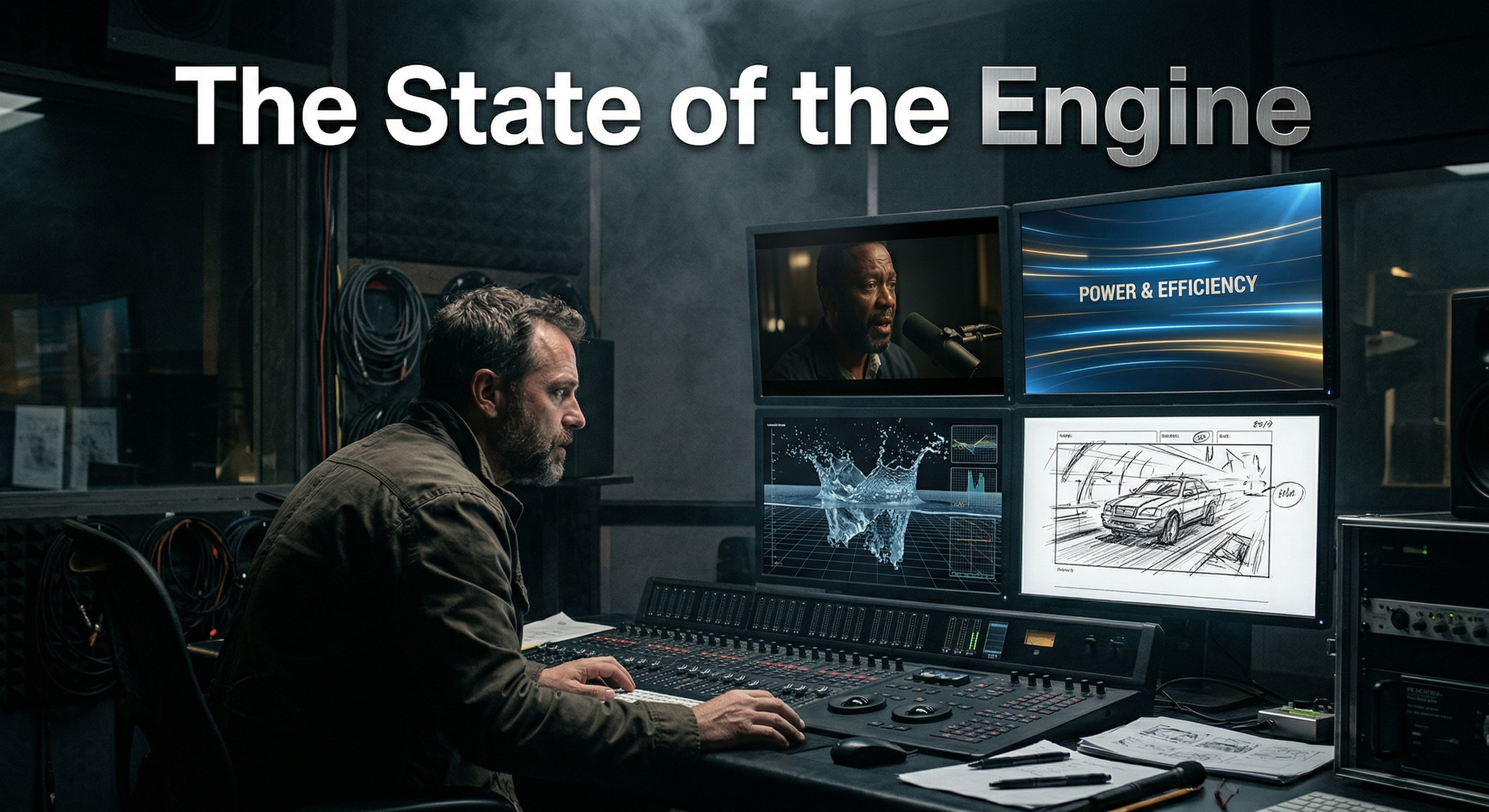

The toy phase is over. AI video is no longer a slot machine for lucky accidents. The serious platforms now compete on control, consistency, and how cleanly they fit into a real production workflow. That changes the job of the studio. The question is no longer which model can make one impressive clip. The question is which engine should handle which shot, under which constraints, with the fewest surprises once the brief turns commercial.

Google Veo 3: The Narrative Powerhouse

Google is pushing realism, prompt adherence, creative control, and native audio as a single package. The model can generate sound effects, ambient noise, and dialogue inside the clip itself. It generates cinematic clips of up to eight seconds, with multiple duration options, reference image guidance, and first-to-last frame transition support. That combination matters because it shifts Veo away from silent visual novelty and toward directed scene construction.

In practical terms, Veo is strongest when the scene lives or dies on instruction density. It rewards detailed shot design, camera direction, and cinematic specificity. That makes Veo unusually good for dialogue-driven character work, short narrative beats, and controlled eight-second scenes where tone, sound, and framing must land together. Its weakness is structural, not visual: you are still building films from short segments, not extended dramatic coverage. But inside that constraint, Veo behaves less like a toy and more like a narrative unit. It currently stands out for short film scenes, branded micro-dramas, and any brief where sound is part of the meaning rather than a post-production layer.
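
The segment constraint above can be made concrete with a little planning arithmetic. The sketch below is illustrative only: the function name `plan_segments`, the even-split strategy, and the eight-second ceiling used as a default are assumptions for the example, not part of any Veo API, and a real platform may only accept specific duration options.

```python
import math

# Per-clip ceiling taken from the eight-second figure in the text.
# Treat it as an assumption; check the platform's current duration options.
MAX_CLIP_SECONDS = 8.0


def plan_segments(scene_seconds: float, max_clip: float = MAX_CLIP_SECONDS) -> list[float]:
    """Split a scene into the fewest equal-length clips that fit the ceiling."""
    if scene_seconds <= 0 or max_clip <= 0:
        raise ValueError("durations must be positive")
    # Fewest clips needed so that no clip exceeds the ceiling.
    count = math.ceil(scene_seconds / max_clip)
    # Even split (an assumption): every clip gets the same share of the scene.
    return [scene_seconds / count] * count


if __name__ == "__main__":
    # A 20-second dramatic beat needs three clips under an 8-second ceiling.
    print(plan_segments(20.0))
```

An even split is only one possible choice. In practice the cut points would follow the dramatic beats of the scene, with each clip still held under the ceiling.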

Runway: The Commercial Standard

Runway set the commercial grammar that many teams still trust: descriptive prompting, fine-grained temporal control, precise keyframing, and advanced camera controls inside a workflow designed for repeatable intent rather than happy chaos.

What makes Runway commercially important is not spectacle. It is discipline. The engine was trained on temporally dense captions, and its architecture emphasizes direct prompting, structured scene description, and camera movement control with defined direction and intensity values. That design philosophy gives the engine a practical advantage for B2B work, fintech spots, product films, and social branding where visual stability is more important than surprise. When building a social branding video for a demanding client, you do not use an engine because it might invent something wild. You use Runway when the client has a deck, a moodboard, a compliance team, and absolutely no appetite for drift between take three and take seven.

Kling AI: The Physics Engine

Kling foregrounds camera control, motion control, start and end frame workflows, and dynamic brush masks for steering movement inside image-to-video outputs. That combination tells you exactly what Kling is trying to be: a motion-first engine built for shots where the problem is not just appearance, but interaction.

Kling describes its image-to-video behavior as physics-aware, with motions such as walking and fluid dynamics respecting inertia and gravity. When the brief involves force, contact, material response, or difficult camera movement, Kling is often the engine to test first. Action inserts, impact shots, object interaction, fabric movement, water behavior, and motion-heavy product visuals all benefit from that orientation. The tradeoff is that motion ambition increases the burden on direction. Kling can do more in physical space, but it also demands stronger shot design if you want that energy to read as intentional instead of merely impressive.

Luma Dream Machine: The Rapid Prototyper

Luma operates on a different frequency. Dream Machine is designed to generate cinematic video in minutes, with a broader platform story built around boards, structured visual planning, and fast iteration. The value proposition is blunt: move from script or concept to a structured visual sequence in minutes instead of days. That is why Dream Machine remains strategically important even when another engine may win the final render. It collapses pre-production time.

This is the engine for animatics, concept validation, visual exploration, and early client alignment. It is also useful in post-production, because its video-to-video tooling handles camera motion, reframing, and environment changes without requiring a full reshoot. The key point is speed married to usefulness. Dream Machine is fast in the part of the pipeline where speed protects the budget by exposing bad ideas early. In a serious studio, that is operational value.

The Multi-Engine Pipeline

There is no single best engine, only the right engine for the shot. Veo is the strongest narrative unit because audio, prompt adherence, and cinematic specificity are built into the same system. Runway remains the commercial safety pick because its grammar of control is disciplined and client-friendly. Kling is the motion specialist when physics and interaction are the real brief. Luma is the rapid prototyper that saves time before the expensive decisions begin.
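
The shot-by-shot matching described above can be sketched as a small routing function. This is a minimal illustration, not a production tool: the `Shot` fields, the `pick_engine` name, and the priority order of the checks are all assumptions made for the example, and none of it calls a real vendor API.

```python
from dataclasses import dataclass
from enum import Enum


class Engine(Enum):
    VEO = "Veo"
    RUNWAY = "Runway"
    KLING = "Kling"
    LUMA = "Luma"


@dataclass(frozen=True)
class Shot:
    """Hypothetical shot brief; every field is an illustrative assumption."""
    name: str
    is_prototype: bool = False        # animatic, concept validation, early alignment
    physics_heavy: bool = False       # force, contact, fluids, fabric, hard camera moves
    needs_native_audio: bool = False  # dialogue or sound is part of the meaning


def pick_engine(shot: Shot) -> Engine:
    """Route one shot to an engine. The check order is an assumed priority."""
    if shot.is_prototype:
        return Engine.LUMA    # speed matters most before expensive decisions
    if shot.physics_heavy:
        return Engine.KLING   # motion and interaction are the real brief
    if shot.needs_native_audio:
        return Engine.VEO     # sound and picture must land together
    return Engine.RUNWAY      # default: the controlled commercial pick


if __name__ == "__main__":
    shots = [
        Shot("pitch animatic", is_prototype=True),
        Shot("glass shatter insert", physics_heavy=True),
        Shot("two-line dialogue beat", needs_native_audio=True),
        Shot("fintech product spot"),
    ]
    for shot in shots:
        print(f"{shot.name}: {pick_engine(shot).value}")
```

A real studio would weigh more than three flags, and a shot that is both physics-heavy and dialogue-driven would need a judgment call that a fixed priority order cannot make. The point of the sketch is only that the choice is made per shot, not per project.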

A modern AI studio does not swear loyalty to one model. It runs a multi-engine pipeline, selecting Veo for a short dramatic scene, Runway for a controlled fintech commercial, Kling for a complex physical action beat, and Luma when the job is to think visually at speed. The future belongs to studios that choose engines the way cinematographers choose lenses: with strict intention, not brand worship.