Abstract: Most failures in AI filmmaking begin with a category error. Generative models are treated as if they were primitive directors: a prompt goes in, a scene comes out, and the user mistakes local beauty for cinematic structure. This paper examines Henrie Studio’s Synthesis Pipeline, a production method that separates visual composition from temporal generation to defeat narrative collapse.

Individual AI-generated clips may be striking, but the film often collapses under sequence pressure. Faces drift. Wardrobe mutates. Lens language resets from shot to shot. The viewer does not always identify the technical cause, but they feel the fracture immediately. Henrie Studio’s response is what our Creative Lead calls the Synthesis Pipeline.

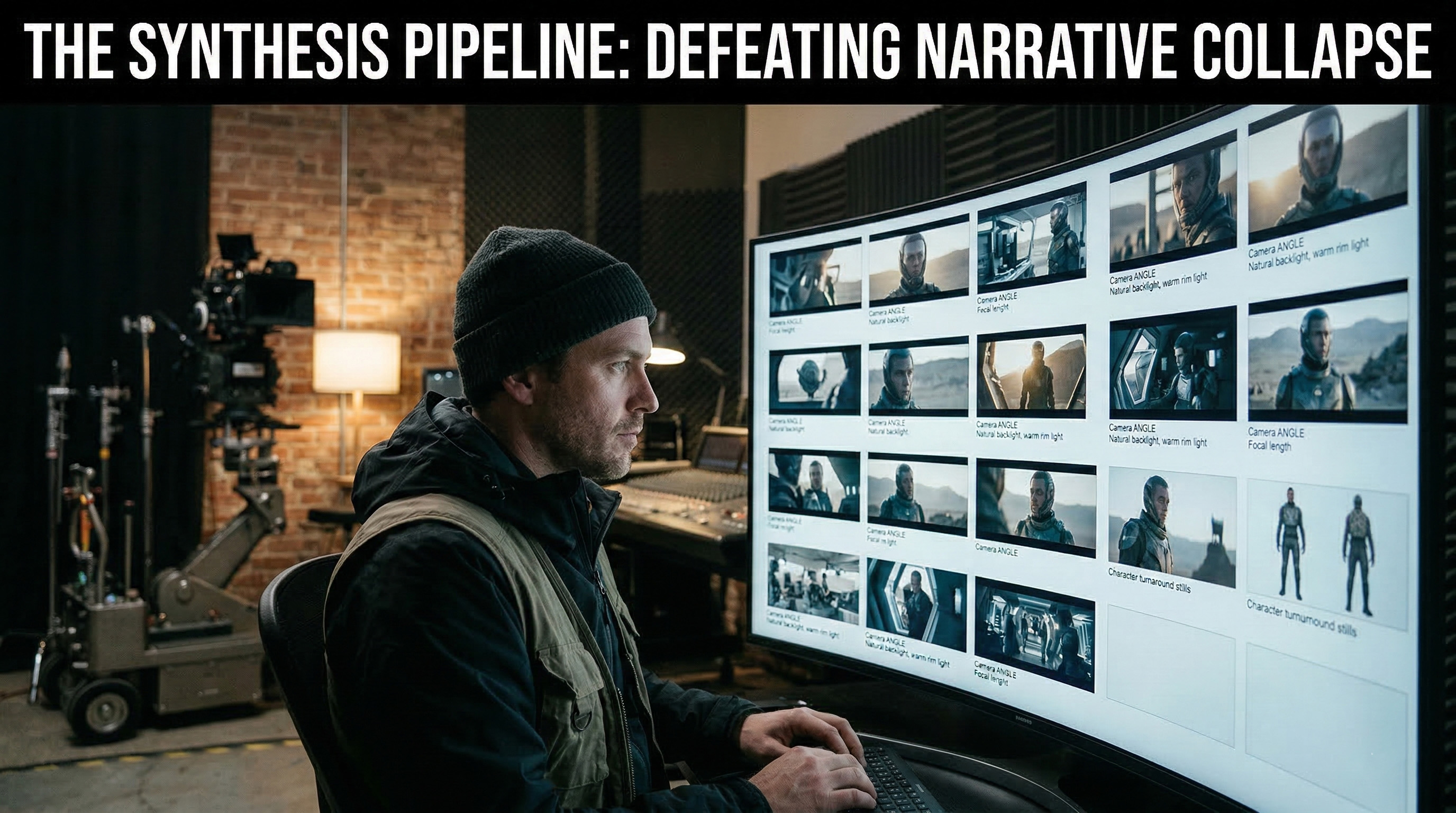

Instead of asking a model to invent framing, identity, environment, motion, and continuity simultaneously, our team breaks the problem into controlled stages. First, the film is composed as a static visual system. Only when the storyboard, character identity, and environmental logic are locked does the studio move into motion generation.

The Challenge: The Illusion of the Prompt

The fantasy of raw prompting is seductive because it compresses craft into syntax. Type a paragraph, receive a moving image, and it appears that cinema has been reduced to language alone. That illusion holds for a few seconds. Then the edit begins.

What breaks is not beauty; beauty is easy. What models cannot reliably preserve is relationship. One shot does not know how to belong to the next. A close-up may imply one facial structure, while the reverse angle quietly rewrites the same character. A wide shot may establish golden-hour backlight, then the insert arrives with a colder sky model and a different sun position.

"If you’re prompting for finished video at the start, you’re asking the model to solve six departments at once. It becomes production designer, DP, wardrobe, casting, motion team, and editor in one pass. That’s not control. That’s surrender with good lighting."

— Creative Lead, Henrie Studio

At Henrie Studio, the term for this failure mode is narrative collapse. The clip still looks expensive; it just no longer belongs to a larger body of work.

The Methodology: Inside the Studio Process

Henrie Studio’s working principle is aggressively simple: never generate video before the static foundation is locked. That rule is not aesthetic dogma; it is error management. We treat every scene as a continuity problem before it becomes an animation problem.

"We do not start with motion. Motion is the last privilege we earn. First, we need proof that the world exists when it is still. If the sequence does not cut as stills, video will not save it. Motion usually hides mistakes for three seconds, then exposes them harder."

— Creative Lead

Professionals delay motion because motion multiplies instability. Once a character begins walking, micro-errors in anatomy and spatial logic propagate across frames. Henrie’s pipeline therefore treats still-image generation as a form of hard constraint programming. The film’s visual physics must be declared before time is introduced.
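The rule that motion waits for a locked static foundation can be read as a simple gate. The sketch below is illustrative only and is not the studio's tooling: the `SceneFoundation` record, its field names, and `ready_for_motion` are hypothetical names, chosen to mirror the three prerequisites named earlier (storyboard, character identity, environmental logic).

```python
from dataclasses import dataclass


@dataclass
class SceneFoundation:
    """Hypothetical record of which static prerequisites are locked for a scene."""
    storyboard_locked: bool = False
    identities_locked: bool = False
    environment_locked: bool = False

    def missing(self) -> list[str]:
        """Return the names of the prerequisites that are not yet locked."""
        checks = {
            "storyboard": self.storyboard_locked,
            "character identity": self.identities_locked,
            "environmental logic": self.environment_locked,
        }
        return [name for name, locked in checks.items() if not locked]


def ready_for_motion(foundation: SceneFoundation) -> bool:
    """Allow motion generation only when no static prerequisite is missing."""
    return not foundation.missing()
```

A scene with only its storyboard locked would report character identity and environmental logic as missing, and the gate would stay closed until both are resolved.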

The Pipeline Breakdown

Phase 1: The Visual Script

The process begins with the visual script. This is the shot list rebuilt as a frame-accurate storyboard, generated image by image until the visual language stops drifting. Camera height, focal compression, and practical light direction are all stabilized in static form. Each shot is resolved as if it were a locked frame from a finished edit.

"The storyboard is where we remove false freedom. Once the angle is correct, I do not want the video model improvising a new one because it ‘felt cinematic.’ Cinema is decisions surviving contact with the next shot."

Phase 2: Identity Locking

After shot composition comes identity locking. The team generates structured turnaround sets for each principal character: front, three-quarter, profile, and rear variants under controlled light. Hairline shape, jaw width, and costume seams are all stress-tested before the character enters motion.
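One way to picture a turnaround set is as a checklist that must be fully approved before a character is considered locked. This is a minimal sketch under stated assumptions: the four views come from the description above, while the `LIGHTS` setups, `turnaround_plan`, and `unresolved` are hypothetical names introduced for illustration.

```python
from itertools import product

# The four views named in the text.
VIEWS = ("front", "three-quarter", "profile", "rear")
# Assumption: two controlled lighting setups; the text only says "controlled light".
LIGHTS = ("key-left", "key-right")


def turnaround_plan(character: str) -> list[tuple[str, str, str]]:
    """List every (character, view, light) still that needs to be generated."""
    return [(character, view, light) for view, light in product(VIEWS, LIGHTS)]


def unresolved(plan, approved):
    """Return the planned stills that have not been approved yet, in plan order."""
    approved_set = set(approved)
    return [item for item in plan if item not in approved_set]
```

Under this reading, a character is locked only when `unresolved` returns an empty list; any still that shows drift in hairline, jaw width, or costume seams stays out of the approved set and is regenerated.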

"You don’t lock a character by finding one beautiful image. You lock them by exhausting their weaknesses. Turn them. Break the symmetry. Change the light. See what mutates. Then rebuild until the mutations stop."

Phase 3: Temporal Translation

Only after the visual script and identities are stabilized do we move into temporal translation. The key unit here is modest: short bursts, typically around three seconds. Locked stills are pushed into video systems as motion anchors. Prompts are stripped down to movement description: head turn, fabric response, atmospheric drift.

Because the composition is already solved, the model’s job shrinks. It is no longer designing the shot from scratch; it is translating still logic into time. This also isolates errors: a failed three-second burst can be discarded and regenerated without contaminating the scene architecture.
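The error-isolation idea can be sketched as a per-burst retry loop: each locked still is rendered independently, so a rejected burst is retried on its own while accepted bursts are left untouched. The code below is a hedged illustration, not a real video-model API. The `render` callable is a hypothetical stand-in that returns a clip identifier, or `None` when a burst is rejected, and `max_attempts` is an assumed retry budget.

```python
from typing import Callable, Optional


def generate_bursts(
    anchors: list[str],
    render: Callable[[str, int], Optional[str]],
    max_attempts: int = 3,
) -> dict[str, Optional[str]]:
    """Render one short burst per locked still, retrying failures in isolation.

    `render(anchor, attempt)` is a hypothetical stand-in for a video model call.
    It returns a clip identifier, or None if the burst was rejected.
    """
    results: dict[str, Optional[str]] = {}
    for anchor in anchors:
        clip = None
        for attempt in range(1, max_attempts + 1):
            clip = render(anchor, attempt)
            if clip is not None:
                break  # keep the first accepted take; other anchors are unaffected
        results[anchor] = clip  # None means every attempt was rejected
    return results
```

Because each anchor is handled on its own, a burst that fails every attempt shows up as `None` for that still alone, and the locked storyboard and identities remain unchanged.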

Phase 4: Post-Production and Sound

The final image is not finished when generation stops; it becomes editable material. Motion clips are retimed and speed-ramped to harmonize cadence. Color grading restores cross-shot consistency where model output has shifted gamma or saturation response.

Sound does even heavier lifting. Foley rebuilds material credibility where generated motion feels frictionless. Footsteps, cloth tension, and room tone reintroduce physical consequence.

"AI gives you motion without impact. Post is where we put the mass back in."

Conclusion

The Synthesis Pipeline accepts a hard truth: AI may replace cameras in certain stages, but it does not replace blocking, continuity, editorial judgment, or shot design. It does not abolish discipline; it punishes the lack of it. The machine can generate images. The film still has to be built.