How AI Turns Text Into Video: What It Is, How It Works, and Why It Changes Everything

For most of the history of moving images, making a video required the same basic ingredients: a camera, a location, people in front of and behind the lens, and time—usually a lot of it. A short commercial could consume a full production day. A short film could take weeks or months. Even a basic explainer video often meant coordinating scripts, voiceovers, stock footage, editing software, and revisions across multiple people.

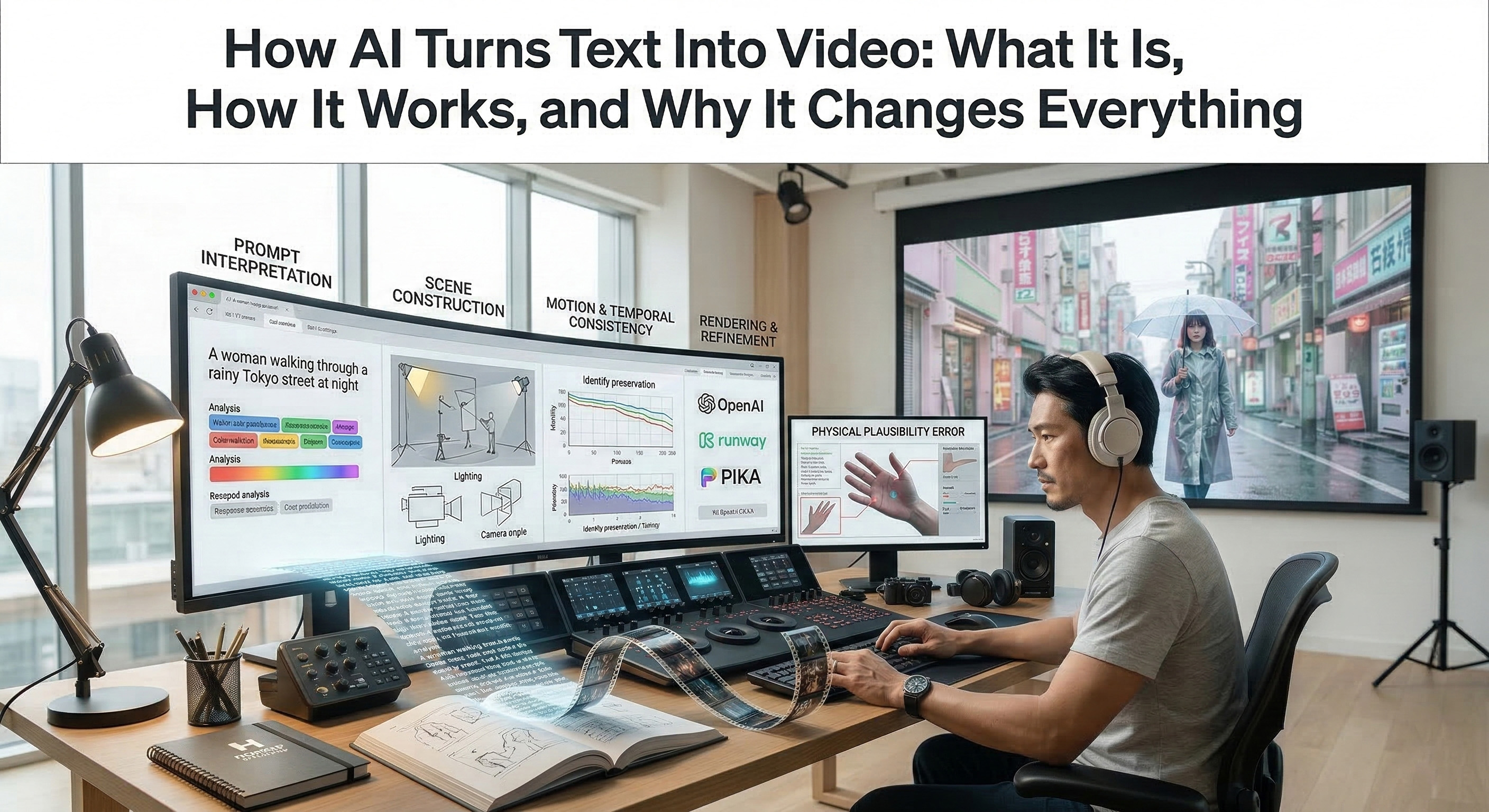

That equation is changing. In just a few years, text-to-video AI has moved from research demos to real products used by creators, studios, and marketing teams. Today, major platforms such as OpenAI’s Sora, Runway, and Pika all offer ways to generate video from text prompts, and the broader field is improving quickly in realism, control, and ease of use.

The core idea is simple: a user writes a prompt in natural language, and the system produces a video clip that attempts to match that description. The technology is still young, uneven, and far from replacing traditional filmmaking. But it is already changing something important: the minimum cost, time, and technical skill required to turn an idea into moving images is falling dramatically. That shift matters not only for filmmakers, but for startups, educators, marketers, and independent creators of all kinds.

What “Text-to-Video” Actually Means

Text-to-video is exactly what the name suggests: a system takes written input and generates a video output. If text-to-image models made it possible to type a description and receive a still image, text-to-video extends that logic across time. Instead of producing one frame, the model must produce many frames that remain visually coherent while also showing plausible motion.

That temporal dimension is what makes video generation substantially harder than image generation. A generated image only needs to look convincing in a single frozen moment. A generated video must maintain the same subject, environment, lighting logic, and motion over a sequence of frames.

In practice, most current consumer-facing tools still generate relatively short clips rather than full scenes or finished films. But even short clips are enough to make the technology useful for concepting, previsualization, social content, product storytelling, and creative experimentation.

How Text-to-Video AI Works, in Simple Terms

The underlying systems are complex, and different companies use different model designs. But from a practical point of view, the workflow can be understood in four broad stages.

1. Prompt Interpretation

The model first converts the user’s prompt into an internal representation of meaning. It tries to identify the subject, action, setting, mood, style, and any implied cinematic cues. A prompt such as “a woman walking through a rainy Tokyo street at night” contains not just objects, but relationships: who is present, what is happening, where it happens, and what the atmosphere should feel like.

2. Scene Construction

The system then begins building a visual scene that matches the prompt. This includes composition, subject placement, lighting cues, environment, and stylistic direction. In effect, the model is deciding what the scene should look like before it decides how that scene should move.

3. Motion and Temporal Consistency

This is the hardest part. A convincing video requires more than individual good-looking frames. The model must preserve identity and structure across time: the same face, the same clothing, the same background logic, and motion that feels continuous rather than flickering or unstable.

4. Rendering and Refinement

The generated sequence is assembled into a playable clip. Depending on the system, additional refinement steps may improve sharpness, motion smoothness, or adherence to the prompt. Some platforms also layer in audio, camera controls, or editing tools as part of a broader workflow.

Examples of Current Tools

The current landscape is moving fast, but a few platforms illustrate where the field stands now.

OpenAI’s Sora is no longer just a research preview. It has evolved into a broader video generation platform with improvements in realism, physical accuracy, controllability, and synchronized sound.

Runway has pushed toward greater control and consistency, with a focus on more stable characters, locations, objects, and stylistic continuity across shots.

Pika remains positioned around accessibility, speed, and social-first creativity. Its platform emphasizes fast generation, playful transformations, and workflows aimed at creators who want to iterate quickly rather than build traditional production pipelines.

The important point is not that one tool has “won.” It is that the entire category is maturing quickly. Over a short period, the industry has moved from eye-catching demos to tools that are already useful in real creative workflows.

What This Changes for Creators

For creators, the biggest change is not that AI instantly produces masterpieces. It is that visual experimentation becomes dramatically cheaper. A solo creator can test multiple visual directions in minutes. A filmmaker can previsualize a scene before investing in live production. A YouTuber can generate supporting visuals for abstract ideas that would otherwise require stock footage, motion graphics, or a dedicated shoot.

This does not make cinematography, editing, or directing obsolete. Storytelling still depends on judgment: pacing, tone, narrative structure, composition, timing, and emotional control. AI does not remove the need for taste. What it removes—at least partially—is the production barrier between an idea and an initial visual draft.

In other words, text-to-video shifts the bottleneck. For many small creators, the limiting factor used to be resources. Increasingly, the limiting factor is clarity of vision: knowing what you want to make, and how to describe it well enough to iterate toward it.

What This Changes for Companies

For companies, especially startups and lean teams, video has often occupied an awkward position: strategically important, but expensive enough to postpone. A concept ad, product story, branded visual, or internal explainer often required outside help or specialized in-house talent.

Text-to-video changes the economics of early-stage content production. Teams can mock up concepts faster, test messaging sooner, and produce rough visual assets before paying for higher-end execution. That is especially valuable in the broad middle tier of content: social clips, internal storytelling, presentations, pitch visuals, experiments, and campaign prototypes.

This does not mean AI video replaces polished brand filmmaking. It means companies can now produce far more visual material before deciding what deserves full production. In practical business terms, that is a meaningful shift in speed and optionality.

Limitations and Risks

The technology remains impressive and unreliable in equal measure.

The first limitation is consistency. Even the best systems still struggle to preserve the same character, wardrobe, background, or exact scene logic across many shots. Tools are improving here, but consistency remains one of the field’s defining challenges.

The second is control. Prompting is powerful, but it is not the same thing as directing. Users often get outputs that are close in spirit but wrong in framing, performance, camera logic, or emotional tone. In many cases, iteration is still required to get usable material.

The third is physical plausibility. Motion has improved, but hands, object interaction, liquids, crowd behavior, and complex cause-and-effect can still fail in noticeable ways.

Finally, there are serious ethical and legal concerns. Video generation raises difficult questions about training data, copyright, consent, impersonation, deepfakes, and misinformation. These are not side issues. They are central to how the technology will be governed, trusted, and deployed at scale.

Where AI Video Is Headed

Exact predictions are risky in a field changing this quickly, but a few directional trends are already visible.

First, control is becoming more important than novelty. Early excitement focused on whether models could generate video at all. The next phase is about whether users can generate the right video with consistency and precision.

Second, video generation is merging with broader creative workflows. Instead of isolated clip generators, platforms are becoming more like AI-assisted studios: generating scenes, refining shots, adding sound, and fitting into iterative production pipelines.

Third, the cultural definition of video production is expanding. More people can now make moving images without traditional equipment, crews, or formal technical training. That does not erase the gap between amateur and expert work. But it does raise the baseline of what one person or one small team can produce.

Conclusion

Text-to-video AI is not a finished medium. It is an emerging capability—uneven, rapidly evolving, and already useful in very real ways. It does not replace filmmaking, and it does not eliminate the human judgment that makes visual storytelling meaningful.

What it does is lower one of the oldest barriers in media production: the barrier between imagining a scene and seeing it. For creators, startups, educators, and brands, that is not a gimmick. It is a structural change in how ideas can become visuals.

The more interesting question is no longer whether AI can generate video. It clearly can. The real question is what creators, companies, and audiences will choose to do with that new power.